A message that has been hidden through the use of a code is automatically of greater interest than one that can be instantly read.

Edward Elgar’s expertise in fashioning and decoding ciphers merits an entire chapter in Craig P. Bauer’s recently published history of cryptography, Unsolved! Elgar’s lifelong obsession with secret codes is a dominant idiosyncrasy of his psychological profile. One would reasonably expect that scholars would scour his Enigma Variations for cryptograms, yet it is shocking they have made no concerted efforts in that direction nor encouraged others to seriously pursue that promising avenue of inquiry. On the contrary, some go so far as to deny the likelihood of “precompositional calculation” in the case of the Enigma Variations, ruling out of hand the possibility Elgar embedded any cryptograms or elaborate counterpoints in his breakout symphonic masterpiece.

Wallowing in their inability to decode Elgar’s contrapuntal riddle, the academic elite has thrown in the collective towel with an almost unanimous disavowal of the prospect of ever discovering the Enigma Variations’ covert melody or any ciphers which could surrender its secrets. My mission is to pick up that discarded towel, saturate it with facts and analysis, whirl it tight and administer a stinging thwack to academia’s posterior to snap them out of their stupor.

If only Elgar scholars had taken a keener interest in cryptography. Simon Singh explains in The Code Book, “Cracking a difficult cipher is akin to climbing a sheer cliff face: The cryptanalyst is seeking any nook or cranny that could provide the slightest foothold.” One of the greatest vulnerabilities of ciphers is their anomalies, features that seem odd or out of place. In the case of the Enigma Variations, the anomalous Mendelssohn fragments cited in Variation XIII prove to be rich fishing grounds for cryptograms. Extensive research has so far netted seventeen different ciphers associated with those seemingly extraneous melodic droplets from Felix Mendelssohn’s oceanic overture Calm Sea and Prosperous Voyage. These cryptographic discoveries are far from surprising except for those unschooled in that opaque art form or steeped in denial, invoking the phantom of confirmation bias that stalks and fuels their own vacuous criticisms.

The insistence by some that the solution to the Enigma Variations should be obvious betrays a profound ignorance of cryptography, a discipline dedicated to concealing the obvious. Below the sonic surface of Elgar’s calm sea swims a veritable school of cryptograms that escaped the notice of scholars more adept at fishing for excuses than ciphers. It is exciting to report the discovery of an eighteenth cryptogram ensconced within Elgar’s Mendelssohn fragments. It is based on the written major key signatures for transposing instruments tasked with playing the Mendelssohn fragments. The written keys for those transposing instruments encode three letters hinted at by three asterisks in the title of Variation XIII. Those three absent letters turn out to be the initials for the absent Theme’s common three-word title. What follows is a detailed description and decryption of the Mendelssohn Fragments Major Keys Cipher.

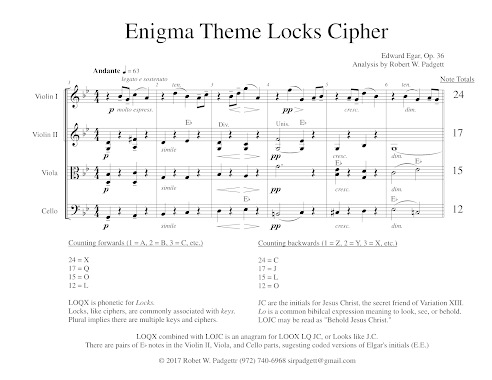

Careful attention was given to the major keys of the transposing instruments assigned to the Mendelssohn fragments because of the prior discovery of the Enigma Locks Cipher. In the first six bars of the Enigma Theme marked off by a conspicuous double bar, Elgar uses the total number of notes played by each section of the string quartet to encode a phonetic spelling of the word Locks as “LOQX”. Elgar's personal correspondence confirms that phonetic spellings are a hallmark of his writing style. This encryption is achieved by an elementary number-to-letter key in which a number is converted into the corresponding letter of the alphabet. That discovery proved decisive because of the realization that locks are opened by keys.
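The number-to-letter key described above can be sketched in a few lines of Python. This is only an illustration of the A=1 through Z=26 conversion. The function names are hypothetical, and the counts shown are the ones implied by the letters “LOQX” under that key rather than figures tallied independently from the score.

```python
import string

ALPHABET = string.ascii_uppercase  # "A" is position 1, "Z" is position 26


def numbers_to_letters(numbers):
    """Convert each number (1-26) into the letter at that alphabet position."""
    return "".join(ALPHABET[n - 1] for n in numbers)


def letters_to_numbers(text):
    """Convert each letter into its alphabet position (A=1 ... Z=26)."""
    return [ALPHABET.index(ch) + 1 for ch in text.upper()]


# Note counts implied by the decryption "LOQX" (inferred from the letters,
# not counted from the score for this example).
implied_counts = letters_to_numbers("LOQX")
print(implied_counts)                      # [12, 15, 17, 24]
print(numbers_to_letters(implied_counts))  # LOQX
```

Running the conversion in both directions shows that the key is a simple one-to-one lookup, which is why it qualifies as elementary.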

The plural form of locks indicates that multiple keys are required to unlock the Enigma Variations. This fact was borne out by the discovery of the Enigma Keys Cipher. The Enigma Theme is played in two keys, the modes of G minor and G major. The letters of the accidentals for those parallel keys (B-flat, E-flat, and F-sharp) are an anagram of the initials for Ein feste Burg, the covert Theme of the Enigma Variations. The Enigma Theme’s key signatures form a virtual key that opens Elgar’s melodic strongbox.

There is another set of keys that deserves greater scrutiny, namely the major key signatures associated with the Mendelssohn fragments in Variation XIII. All of these fragments are performed by transposing instruments that play a written note at a different tone known as concert pitch. In that movement, there are three Mendelssohn fragments quoted by the B-flat clarinet, a transposing instrument that plays a written note a major second lower. The first two Mendelssohn quotations are in A-flat major, and a third in E-flat major. The principal clarinet performs the A-flat major quotations in the written pitch of B-flat major, and the E-flat major quotation in the written pitch of F major. In all, there are three Mendelssohn quotations in the full score performed by the B-flat clarinet in two contrasting major modes. It is hardly coincidental the written keys (B-flat major and F major) of these major Mendelssohn fragments furnish two of the three initials for Ein feste Burg.

One Mendelssohn fragment not explicitly identified by quotation marks in the orchestral score is played in the key of F minor. Three F trumpets and three trombones perform this minor fragment in octaves with the trumpets assigned the upper voice, and the trombones the identical pitches an octave lower. Elgar does not enclose this F minor fragment in quotation marks because it departs from the source melody's original major mode. Similar to the B-flat clarinet, the F trumpet is a transposing instrument that plays a written note a perfect fourth higher in concert pitch. In the case of the F minor fragment, the written pitch for the F trumpet is transposed down a perfect fourth to C minor.

Confining this analysis of the Mendelssohn fragments to parts played by only transposing instruments (the B-flat clarinet and F trumpet), the written keys are B-flat major, F major, and C minor. As previously observed, two of those three letters (B and F) are the third and second initials of Ein feste Burg. The missing E is provided by the relative major key of C minor, which is E-flat. The modes of E-flat major and C minor are said to be related because they share the same accidentals in their key signatures: B-flat, E-flat, and A-flat. The original Mendelssohn fragment is written in a major mode, so this invites homing in on just the major written keys of the transposing instruments that play the Mendelssohn fragments. The major modes of the written keys in which the transposing instruments perform the Mendelssohn fragments are B-flat, F, and E-flat. Those key letters are an anagram for the initials of the covert Theme’s common three-word title, Ein feste Burg.
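The chain of transpositions just described (concert key to written key, then minor to relative major) can be checked with a short Python sketch. The helper names and the table of instrument offsets are illustrative assumptions. The concert keys and instruments are taken from the description above: the B-flat clarinet part is written a major second above concert pitch, and the F trumpet part a perfect fourth below.

```python
NOTE_LETTERS = "CDEFGAB"
NATURAL_SEMITONES = {"C": 0, "D": 2, "E": 4, "F": 5, "G": 7, "A": 9, "B": 11}


def transpose(note, letter_steps, semitones):
    """Transpose a note name such as 'Ab' by a diatonic interval.

    letter_steps: how many letter names to move (major second up = +1).
    semitones: chromatic size of the same interval (major second up = +2).
    """
    letter, accidental = note[0], note[1:]
    pitch = NATURAL_SEMITONES[letter] + accidental.count("#") - accidental.count("b")
    new_letter = NOTE_LETTERS[(NOTE_LETTERS.index(letter) + letter_steps) % 7]
    target = (pitch + semitones) % 12
    # Signed distance from the natural letter to the target pitch (-6..+5).
    diff = (target - NATURAL_SEMITONES[new_letter] + 6) % 12 - 6
    return new_letter + ("#" * diff if diff > 0 else "b" * -diff)


# Concert pitch to written pitch, as (letter_steps, semitones).
WRITTEN_OFFSET = {
    "Bb clarinet": (1, 2),   # written a major second higher than it sounds
    "F trumpet": (-3, -5),   # written a perfect fourth lower than it sounds
}


def written_major_key(concert_tonic, mode, instrument):
    """Return the written key, replaced by its relative major if minor."""
    steps, semis = WRITTEN_OFFSET[instrument]
    written = transpose(concert_tonic, steps, semis)
    if mode == "minor":
        written = transpose(written, 2, 3)  # relative major lies a minor third up
    return written


fragments = [
    ("Ab", "major", "Bb clarinet"),
    ("Ab", "major", "Bb clarinet"),
    ("Eb", "major", "Bb clarinet"),
    ("F", "minor", "F trumpet"),
]

keys = [written_major_key(*fragment) for fragment in fragments]
letters = sorted({key[0] for key in keys})
print(keys)     # ['Bb', 'Bb', 'F', 'Eb']
print(letters)  # ['B', 'E', 'F']
```

The sketch treats key names purely as spelled pitch classes, which is enough to confirm that the four fragments reduce to the three key letters B, E, and F.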

One would never detect the Mendelssohn Fragments Major Keys Cipher by merely listening to a performance of Variation XIII in concert pitch. This cryptogram is only discernible when the written parts in the full score are carefully studied. The difference between the written and sounding pitches, with a relative major key thrown in for one of the fragments, serves to conceal the message from straightforward discovery. A common device used to encipher a plaintext message is transposition, a process that rearranges the order of the letters rather than substituting one letter for another. Elgar pays homage to that enciphering technique with his use of two types of transposing instruments assigned to the Mendelssohn fragments as a way to encipher the initials for the covert Theme using their written major keys. This discovery intersects exquisitely with the Enigma Keys Cipher.

The transposition intervals used by the B-flat clarinet and F trumpet are a major second and a perfect fourth respectively. Those two numbers — 2 and 4 — may be viewed as a coded reference to the twenty-four letters in the covert Theme’s complete German title. The number twenty-four is granted a subtle but perceptible emphasis throughout the Enigma Theme. It is the precise number of melody notes in the Enigma Theme’s opening six bars that encode the complete title of the covert Theme using a musical Polybius box cipher. In 1896 — two years before he embarked on the Enigma Variations — Elgar intently studied the Polybius box cipher as documented in Chapter 3 of Craig P. Bauer’s history of cryptography, Unsolved!
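The arithmetic behind the number twenty-four is easy to verify. The short Python check below assumes the complete German title is the hymn's standard first line, Ein feste Burg ist unser Gott, and counts only its letters. The variable names are illustrative.

```python
# Assumed full title: the standard first line of Luther's hymn.
full_title = "Ein feste Burg ist unser Gott"

# Count alphabetic characters only, ignoring the spaces between words.
letter_count = sum(ch.isalpha() for ch in full_title)

# The two transposition intervals read as digits: a second (2) and a fourth (4).
interval_digits = int(f"{2}{4}")

print(letter_count)                     # 24
print(letter_count == interval_digits)  # True
```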

The Mendelssohn fragments are not an anomalous outlier. Contrary to conventional wisdom, they form the very core of Elgar’s cryptographic enigma. This is displayed by his Mendelssohn quotations which strongly imply that Mendelssohn himself quotes the covert Theme just as openly as Elgar quotes him. There are four Mendelssohn fragments in Variation XIII, a figure that targets the exact movement in Mendelssohn’s Reformation Symphony that cites Ein feste Burg. Elgar cites a virtual drop from Mendelssohn’s maritime overture to sonically portray an open sea. With these conspicuous quotations, Elgar hints that one may openly see what is concealed by considering through imitation a famous melody quoted by Mendelssohn. Mendelssohn’s concert overture Calm Sea and Prosperous Voyage was first performed in 1828, and he started work on his Reformation Symphony the following year in 1829. The close proximity of these works in Mendelssohn’s list of compositions is undoubtedly a clue. Another huge hint is Mendelssohn’s baptism as a Lutheran. Despite this historical fact, there are still some scholars who insist Elgar would never deign to quote the music of a Lutheran on account of his Roman Catholicism.

The three asterisks which form the title of Variation XIII prove to be an exquisite example of Elgar’s use of literature on multiple levels. The word asterisk originates from the Greek word asteriskos which translates as “little star.” Elgar sprinkled numerous references to Dante’s Divine Comedy throughout the Enigma Variations. It is remarkable that each of its three canticles — Inferno, Purgatorio, and Paradiso — concludes with the word stars. For sleuths steeped in medieval Italian poetry, there is a revealing literary connection between Elgar’s three asterisks and Dante’s Divine Comedy.

Variation XIII Autograph Score Star of David Asterisks

Variation XIII Published Score Star of David Asterisks

There is yet another literary link between Elgar’s asterisks and Martin Luther, the composer of Ein feste Burg. In 1518 the theologian Johannes Eck wrote a scathing critique of Luther’s Ninety-Five Theses titled Obelisks. At the urging of his friends, Luther penned a defense of his views and gave it the title Asterisks, which was first published in 1545. Variation XIII’s cryptic asterisks are an incredibly revealing clue about the composer of the covert Theme. This explains why Elgar first identified Variation XIII with a solitary capital L. That “L” stands for Luther, not Lygon.

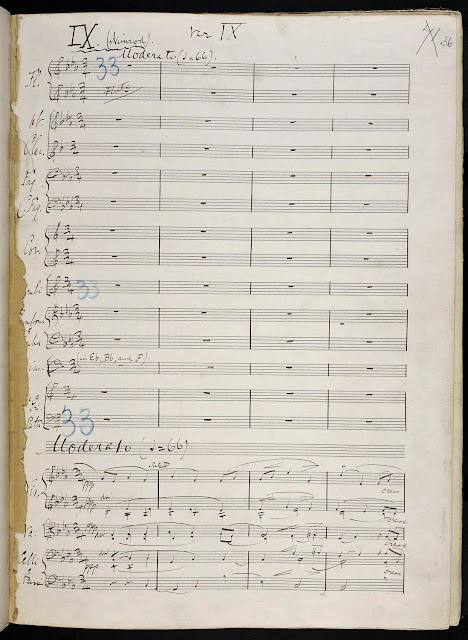

The initials for Elgar’s secretive Theme are enciphered in over a dozen ways in the Enigma Variations with seven localized in the Mendelssohn fragments: The FAE Cipher, Scale Degrees Cipher, Melodic Interval Cipher, Clarinet Nominal Notes Cipher, Clarinet Key Signature Transposition Cipher, Dominant-Tonic-Dominant Cipher, and the Major Keys Cipher described here. The appearance of this identical set of initials in so many diverse ciphers is not evidence of confirmation bias, but rather of deliberate construction. An outstanding example is the tuning of the timpani for Variation IX Nimrod at Rehearsal 33 which is shown as E-flat, B-flat, and F. Those three letters are an anagram of the covert Theme’s initials.

The riddle posed by the three asterisks forming the title for Variation XIII is further answered by the first letters of the titles immediately preceding and following that movement. Known as the Letter Cluster Cipher, the first letters of the titles for Variations XII (B. G. N.) and XIV (E. D. U. and Finale) are an anagram of the initials of the covert Theme, Ein feste Burg. This linguistic twist is undoubtedly the reason Elgar used a German derivation of his name (Eduard) for his initials in Variation XIV. The German title for the covert Theme would also explain why Elgar directed his only German friend portrayed in the Enigma Variations, August Jaeger, to pencil in the word Enigma on the original score. The term Enigma is spelled the same in English and German.
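The Letter Cluster Cipher amounts to an anagram test on first letters, which can be sketched in Python as follows. The function name is hypothetical, and the titles are taken as given above.

```python
def is_anagram(letters_a, letters_b):
    """Return True if both collections hold the same letters, ignoring case and order."""
    return sorted(x.upper() for x in letters_a) == sorted(x.upper() for x in letters_b)


# Titles on either side of Variation XIII, as listed above.
neighboring_titles = ["B. G. N.", "E. D. U.", "Finale"]
first_letters = [title[0] for title in neighboring_titles]

# Initials of the covert Theme's three-word title.
theme_initials = [word[0] for word in "Ein feste Burg".split()]

print(first_letters)                              # ['B', 'E', 'F']
print(is_anagram(first_letters, theme_initials))  # True
```

The same comparison could be applied to any of the other three-letter sets mentioned in this article, since each claim rests on the letters E, F, and B appearing in some order.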

There are other conspicuous appearances of the letters E, F and B on the full score of the Enigma Variations. On the first and final pages of the Master Score, Elgar wrote “FEb” as an abbreviation for the month of February. Elgar capitalized the E in “FEb” because it is the first letter in the covert Theme’s title.

On the last page of the appended Finale completed in July 1899, Elgar again incorporated those same three letters as an acrostic anagram based on the first letters of three terms written in close proximity to one another. Near the word Fine, Elgar signed his name and noted the location as Birchwood Lodge. The first letters of these three adjacent terms (Edward Elgar, Fine, and Birchwood Lodge) are an anagram of the covert Theme’s initials.

Elgar places a subtle emphasis on the number six throughout the Enigma Variations. In recognition of the importance of this number, it is fascinating to observe that the quotation marks at the outset of each major Mendelssohn fragment closely resemble two sixes. This appears to be a coded suggestion to direct one’s attention to the number six, or in this particular context, a melodic sixth. The notes where the clarinet descends by a melodic sixth furnish the initials for Ein feste Burg. In the case of the first A-flat major solo, the clarinet descends by a melodic sixth from a concert C to E-flat followed by an F and G. The E-flat and F are the first two initials, something suggested by two statements of the A-flat Mendelssohn fragment. With the E-flat major solo, the clarinet descends again by a melodic sixth from a concert G to B-flat followed by a C before cadencing on D. The B-flat is the third initial, something implied by only one statement of the Mendelssohn fragment in E-flat major. The same place in the A-flat and E-flat major clarinet solos prefaced by the Mendelssohn quotations, where the melody drops by a melodic sixth, furnishes the same three note letters encoded by other cryptograms in the Enigma Variations.

It has been shown how the major key signatures of the written pitches of transposing instruments granted the special task of performing the Mendelssohn fragments encode the initials for Ein feste Burg. What has remained unnoticed until now is that the note letters E, F, and B also appear sequentially in the clarinet solos. The second through fifth notes of the clarinet solo in E-flat major are F, two E-flats, and B-flat. The note letters on either side of the composer's melodic initials transparently conceal the initials for the covert Theme. This is the case because the unique letters F, E, and B are the initials for Ein feste Burg. They appear in order as FEB, the same letters written twice on the cover of the original full score and a third time on the final page. The two E-flats are a transparent reference to the composer’s initials which appear in numerous cipher decryptions. The Enigma Psalm Cipher is just one of numerous examples.

The Mendelssohn fragments are like a thread that, when pulled, unravels an elaborate tapestry of intersecting music ciphers. Their decryptions reveal and authenticate the covert Theme of the Enigma Variations, and the identity of the secret friend memorialized in Variation XIII. Unfortunately for the scions of academia, they missed the proverbial boat in their blanket dismissals of the Mendelssohn fragments as extraneous with no obvious connection to the core secrets of the Enigma Variations. Now that ship has sailed and they have missed fortune’s tide immortalized in Shakespeare’s masterpiece Julius Caesar. To learn more about the secrets of Elgar’s Enigma Variations, read my free eBook Elgar’s Enigmas Exposed. Please help support and expand my original research by becoming a sponsor on Patreon.
